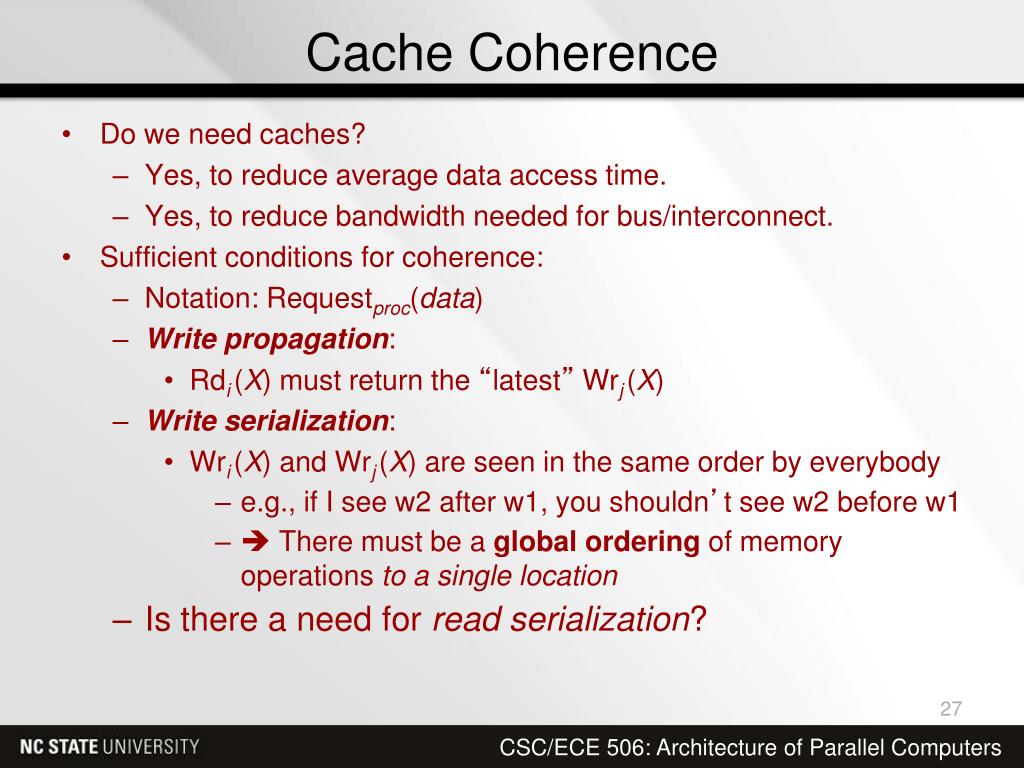

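Two basic approaches to the snoopy protocol have been explored: write invalidate and write update (also called write broadcast). With a write-invalidate protocol, there can be multiple readers but only one writer at a time. When a cache wants to write to a shared line, it first broadcasts a notice that invalidates the copies of that line held in the other caches, which makes the line exclusive to the writing cache. With a write-update protocol, there can be multiple writers as well as multiple readers. When a processor writes to a shared line, the new data is broadcast so that every other cache holding the line can update its copy.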
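The difference between the two snoopy approaches shows up clearly when you count bus traffic. The following minimal Python sketch models three caches on a toy bus. It simplifies a real protocol in several ways. Memory is assumed to be write-through, so a miss always finds current data. Each cache tracks only whether it believes it holds the sole copy of a line. The names `Bus`, `Cache`, `simulate`, and the address `0x40` are hypothetical. After a single invalidation, a write-invalidate writer can keep writing locally, whereas write-update broadcasts every write.

```python
class Bus:
    """Toy shared bus: every broadcast is seen (snooped) by all other caches."""

    def __init__(self):
        self.caches = []
        self.messages = 0  # bus transactions, a rough proxy for bus traffic

    def broadcast(self, sender, kind, addr, value=None):
        self.messages += 1
        for cache in self.caches:
            if cache is not sender:
                cache.snoop(kind, addr, value)


class Cache:
    """Toy cache that follows either a write-invalidate or a write-update policy."""

    def __init__(self, name, bus, memory, policy):
        if policy not in ("invalidate", "update"):
            raise ValueError(policy)
        self.name, self.bus, self.memory, self.policy = name, bus, memory, policy
        self.lines = {}         # addr -> cached value
        self.exclusive = set()  # addrs this cache believes no other cache holds
        bus.caches.append(self)

    def read(self, addr):
        if addr not in self.lines:
            # Read miss: announce it on the bus, then fetch from memory.
            self.bus.broadcast(self, "read", addr)
            self.lines[addr] = self.memory[addr]
        return self.lines[addr]

    def write(self, addr, value):
        self.lines[addr] = value
        self.memory[addr] = value  # assumption: write-through, so memory is never stale
        if addr not in self.exclusive:
            self.bus.broadcast(self, self.policy, addr, value)
            if self.policy == "invalidate":
                self.exclusive.add(addr)  # every other copy has just been invalidated

    def snoop(self, kind, addr, value):
        # Any bus activity on addr by another cache ends our sole ownership of it.
        self.exclusive.discard(addr)
        if addr in self.lines:
            if kind == "invalidate":
                del self.lines[addr]      # stale copy dropped; the next read misses
            elif kind == "update":
                self.lines[addr] = value  # copy refreshed in place


def simulate(policy, writes=3):
    memory = {0x40: 0}
    bus = Bus()
    caches = [Cache(f"P{i}", bus, memory, policy) for i in range(3)]
    for cache in caches:
        cache.read(0x40)          # three read misses: the line is now shared
    for v in range(1, writes + 1):
        caches[0].write(0x40, v)  # P0 writes the same line repeatedly
    held = {c.name: c.lines.get(0x40) for c in caches}
    return held, bus.messages


if __name__ == "__main__":
    print(simulate("invalidate"))  # ({'P0': 3, 'P1': None, 'P2': None}, 4)
    print(simulate("update"))      # ({'P0': 3, 'P1': 3, 'P2': 3}, 6)
```

The message count is only a rough proxy for bus traffic. A real write-update protocol would also skip the broadcast when no other cache holds the line.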

Snoopy protocols distribute the responsibility for maintaining cache coherence among all of the cache controllers in a multiprocessor system. A cache must recognize when a line that it holds is shared with other caches. When an update action is performed on a shared cache line, it must be announced to all other caches by a broadcast mechanism. Each cache controller is able to “snoop” on the network to observe these broadcast notifications and react accordingly. Snoopy protocols are ideally suited to a bus-based multiprocessor, because the shared bus provides a simple means for broadcasting and snooping.

In a directory protocol, the central controller maintains information about which processors have a copy of which lines. When an individual cache controller makes a request, the central controller checks the directory and issues the necessary commands for data transfer between memory and caches or between the caches themselves. The controller is also responsible for keeping the state information up to date. Therefore, every local action that can affect the global state of a line must be reported to the central controller. Before a processor can write to a local copy of a line, it must request exclusive access to the line from the controller. Before granting this exclusive access, the controller sends a message to all processors with a cached copy of this line, forcing each processor to invalidate its copy. After receiving an acknowledgement back from each such processor, the controller grants exclusive access to the requesting processor. When another processor tries to read a line that is exclusively granted to another processor, it sends a miss notification to the controller. The controller then issues a command to the processor holding that line, requiring that processor to write the line back to main memory. After that, the line can be shared for reading by both processors. Directory schemes suffer from the drawbacks of a central bottleneck and the overhead of communication between the various cache controllers and the central controller.
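The directory message flow described above can be traced with a small Python sketch. Everything here is an illustrative assumption rather than a description of real hardware. `DirectoryController` is a hypothetical name, and the controller tracks a single memory line. The processors' caches are simulated as a dictionary inside the controller, and acknowledgements are assumed to arrive immediately. In the trace, two readers are invalidated before a third processor is granted exclusive access. A later read miss then forces the owner to write the line back.

```python
class DirectoryController:
    """Toy centralized directory controller for a single memory line (hypothetical).

    The processors' caches are simulated inside the controller so the message
    flow is easy to follow; real hardware sends these messages over an interconnect.
    """

    def __init__(self, n_procs, value=0):
        self.memory = value
        self.cached = {p: None for p in range(n_procs)}  # p -> local copy, None = no copy
        self.sharers = set()  # directory entry: processors holding a copy of the line
        self.owner = None     # processor currently granted exclusive access, if any
        self.log = []         # (event, processor) pairs in the order they occur

    def _write_back_owner(self):
        # Command the exclusive owner to write its (possibly modified) copy back.
        if self.owner is not None:
            self.log.append(("write_back", self.owner))
            self.memory = self.cached[self.owner]
            self.owner = None  # the former owner keeps a read-only (shared) copy

    def read(self, p):
        if self.cached[p] is not None:
            return self.cached[p]     # local hit: no directory traffic
        self.log.append(("read_miss", p))
        self._write_back_owner()      # the line may be exclusively granted elsewhere
        self.cached[p] = self.memory
        self.sharers.add(p)
        return self.memory

    def write(self, p, value):
        if self.owner != p:
            self.log.append(("request_exclusive", p))
            self._write_back_owner()
            for q in sorted(self.sharers - {p}):
                self.log.append(("invalidate", q))
                self.cached[q] = None
                self.log.append(("ack", q))  # assumption: each ack arrives immediately
            self.sharers = {p}
            self.owner = p
            self.log.append(("grant_exclusive", p))
        self.cached[p] = value  # memory stays stale until the next write-back


if __name__ == "__main__":
    d = DirectoryController(n_procs=3, value=10)
    d.read(0)
    d.read(1)                  # P0 and P1 now share the line
    d.write(2, 99)             # P2 must obtain exclusive access first
    print(d.cached, d.memory)  # {0: None, 1: None, 2: 99} 10
    print(d.read(0))           # 99: the read miss forces P2 to write back
    print(sorted(d.sharers))   # [0, 2]
```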
Directory protocols collect and maintain information about where copies of lines reside. Typically, there is a centralized controller that is part of the main memory controller, and a directory that is stored in main memory. The directory contains global state information about the contents of the various local caches.

Hardware solutions provide dynamic recognition at run time of potential inconsistency conditions. Because the problem is only dealt with when it actually arises, caches are used more effectively, which leads to improved performance over a software approach. Hardware schemes can be divided into two categories: directory protocols and snoopy protocols.

Compile-time software approaches generally have to make conservative decisions, which leads to inefficient cache utilization. Compiler-based cache coherence mechanisms perform an analysis on the code to determine which data items may become unsafe for caching, and they mark those items accordingly. The operating system or hardware then does not cache the items marked noncacheable. The simplest approach is to prevent any shared data variables from being cached. This is too conservative, because a shared data structure may be used exclusively by one process during some periods and may be effectively read-only during other periods. Cache coherence is an issue only during periods when at least one process may update the variable and at least one other process may access it. More efficient approaches therefore analyze the code to determine safe periods for shared variables. The compiler then inserts instructions into the generated code to enforce cache coherence during the critical periods.
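The compile-time criterion described above says that coherence matters only when one process may update a variable while another process may access it. That rule is simple enough to state as code. The following Python sketch assumes the compiler's analysis has already produced two sets for each shared variable in each program phase: the processes that may write it and the processes that may read it. That representation and the names `coherence_needed`, `plan`, and `naive_plan` are hypothetical. The sketch contrasts the simplest approach, which never caches shared data, with a phase-by-phase analysis that flags only the critical periods.

```python
from typing import Dict, List, Set, Tuple

# Hypothetical analysis result for one program phase:
# variable name -> (processes that may write it, processes that may read it)
Phase = Dict[str, Tuple[Set[int], Set[int]]]


def coherence_needed(writers: Set[int], readers: Set[int]) -> bool:
    """True if some process may update the variable while at least one
    *other* process may access (read or write) it in the same phase."""
    accessors = writers | readers
    return any(accessors - {w} for w in writers)


def plan(phases: List[Phase]) -> List[Dict[str, str]]:
    """Period-based analysis: cache freely except during critical periods."""
    return [
        {var: ("critical" if coherence_needed(w, r) else "safe to cache")
         for var, (w, r) in phase.items()}
        for phase in phases
    ]


def naive_plan(phases: List[Phase]) -> List[Dict[str, str]]:
    """Simplest approach: never cache a shared variable, whatever the phase."""
    return [{var: "uncacheable" for var in phase} for phase in phases]


if __name__ == "__main__":
    phases = [
        {"table": ({0}, {0})},          # used exclusively by process 0
        {"table": (set(), {0, 1, 2})},  # effectively read-only
        {"table": ({1}, {1, 2})},       # process 1 updates while process 2 reads
    ]
    print(naive_plan(phases))
    print(plan(phases))
```

Only the third phase needs coherence enforcement. The naive approach gives up caching in all three.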

In software approaches, the work of detecting potential cache coherence problems is transferred from run time to compile time, and the design complexity is transferred from hardware to software.

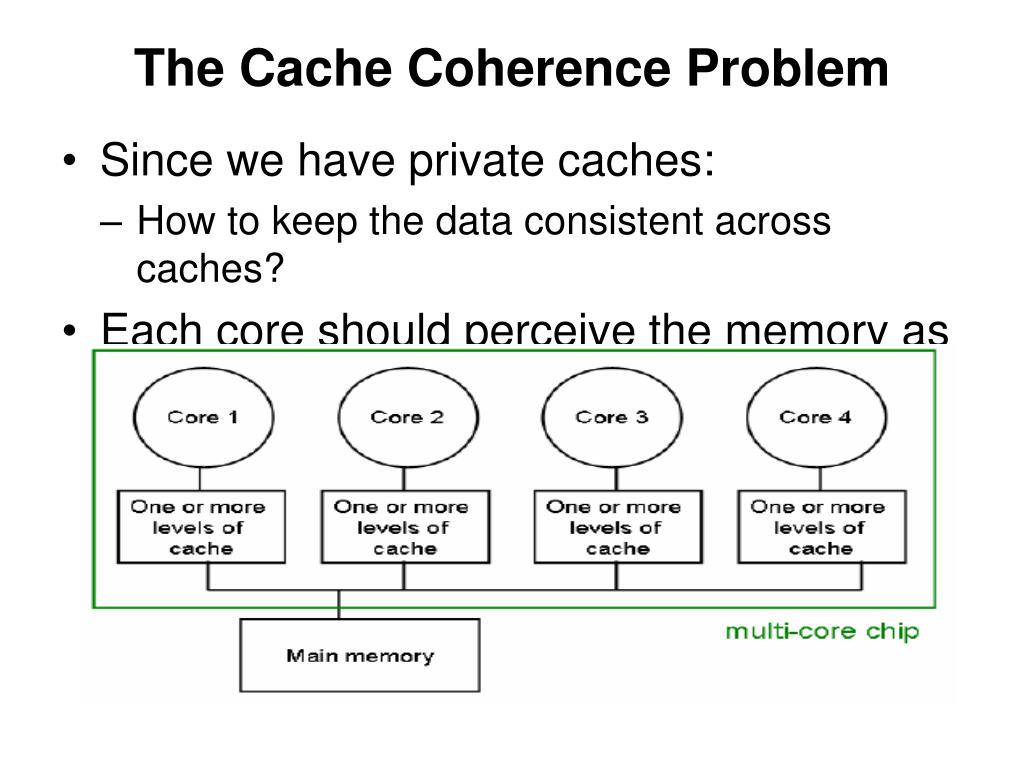

For higher performance in a multiprocessor system, each processor will usually have its own cache. Cache coherence refers to the problem of keeping the data in these caches consistent. The main problem is dealing with writes by a processor. There are two general strategies for dealing with writes to a cache. With write-through, all data written to the cache is also written to memory at the same time. With write-back, a dirty bit is set for the affected block when data is written to the cache, and the modified block is written to memory only when the block is replaced.
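A minimal Python sketch can show both the cost and the coherence risk of each write policy. It models one cache in front of a shared memory dictionary. The class name `WriteCache`, the `demo` helper, and the block name `"A"` are hypothetical. Four writes to the same block cost four memory writes under write-through but only one under write-back. Under write-back, however, main memory holds a stale value until the block is replaced, so another processor reading memory in that window would see out-of-date data.

```python
class WriteCache:
    """Toy cache in front of shared main memory, using one of two write policies."""

    def __init__(self, memory, policy):
        if policy not in ("write-through", "write-back"):
            raise ValueError(policy)
        self.memory = memory  # shared main memory: block -> value
        self.policy = policy
        self.lines = {}       # block -> cached value
        self.dirty = set()    # blocks whose dirty bit is set
        self.memory_writes = 0

    def write(self, block, value):
        self.lines[block] = value
        if self.policy == "write-through":
            self.memory[block] = value  # memory is written at the same time
            self.memory_writes += 1
        else:
            self.dirty.add(block)       # write-back: only set the dirty bit

    def evict(self, block):
        # Replacement: a modified (dirty) block is written back to memory first.
        if block in self.dirty:
            self.memory[block] = self.lines[block]
            self.memory_writes += 1
            self.dirty.discard(block)
        self.lines.pop(block, None)


def demo(policy):
    memory = {"A": 0}
    cache = WriteCache(memory, policy)
    for v in (1, 2, 3, 4):
        cache.write("A", v)
    seen_by_others = memory["A"]  # what another processor reading memory sees now
    cache.evict("A")
    return seen_by_others, memory["A"], cache.memory_writes


if __name__ == "__main__":
    print(demo("write-through"))  # (4, 4, 4)
    print(demo("write-back"))     # (0, 4, 1)
```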
